Artur

AI Driving and GTA 5

Introduction

Self-driving cars have been steadily gaining momentum in academia and industry since the 2005 DARPA Grand Challenge. Many companies are now aiming for commercial fleets of self-driving cars by the end of the decade. One major technical challenge on the road to that goal is reliable and robust perception of the driving scene. Human drivers predominantly use their eyes to detect lanes, signs, pedestrians, and other cars, and they are also able to estimate distances to these objects. Computers have yet to duplicate this capability. Part of the reason computers cannot extract the same information as humans from a driving scene is a lack of data to train them. While plenty of driving video footage exists, videos with appropriate and accurate annotations, such as distances to lane markings in each frame, are rare, and these annotations are difficult to obtain in the real world. I'm therefore looking into using video games to obtain the data needed to train computers to drive. Specifically, video games allow automated scene generation, image collection, and measurement of distances.

Results

I'm working on extracting data from Grand Theft Auto 5 (GTA 5). The game is closed source, which presents several difficulties, but its rich road network, realistic environment, and control over time of day and weather allow for the creation of data sets covering a variety of conditions seen in the real world. For a detailed description of the game and of methods for extracting images, bounding boxes, pixel maps, and lane markings, please see Video Games for Autonomous Driving.pdf. Many of the methods described are yet to be implemented, and my code could use a true computer scientist's touch. Please reach out if you would like to contribute.

Application 1: Estimating Distances to Stop Signs

The first application I'm exploring is the detection of stop signs and the estimation of distance to them. Using GTA 5, we collected 1.4 million images with and without stop signs under different weather and time conditions. We trained a convolutional neural network to predict the presence of a stop sign and estimate the distance to it. On game images, the network works well within 20 meters of the stop sign, detecting 95.5% of the stop signs with an average error in distance of 1.2 m to 2.4 m. This is promising for localizing stop signs when approaching intersections. The next major step is to test this network on real world data. For more information please see Using_GTAV_to_Learn_Distances_TRB_Final.pdf.

In follow-up research, I wrote a thesis exploring this problem. The thesis explores the interaction between virtual reality simulation and deep learning, which may develop computer vision that rivals human vision. Again, Grand Theft Auto 5 is used to collect over half a million images and corresponding ground truth labels, with and without stop signs, in various lighting and weather conditions. A deep convolutional neural network trained on this data and fine-tuned on real world data achieves accuracy in stop sign detection of over 95% within 20 meters of the stop sign and has a false positive rate of 4% on test data from the real world. Additionally, the physical constraints on this problem are analysed, a framework for the use of simulators is developed, and domain adaptation and multi-task learning are explored.

For the full thesis text please see Filipowicz_Artur_Thesis.pdf. For summary presentations of the results please see Filipowicz_Artur_Thesis_Presentation.pdf and Filipowicz_Bhat_SmartDrivingCar_Summit_Presentation.pdf. The other thesis discussed in the second presentation can be found here [1].
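To make the reported figures concrete, here is a minimal sketch of how a detection rate within 20 meters, an average distance error, and a false positive rate could be computed from per-image predictions. The `Sample` record, the `evaluate` function, and the exact metric definitions (for example, averaging the distance error over detected signs only) are assumptions made for illustration; the paper and thesis are the authoritative source for how the numbers above were actually measured.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Sample:
    """Hypothetical per-image record; field names are illustrative only."""
    true_distance_m: Optional[float]  # None when the scene has no stop sign
    predicted_present: bool           # network says a stop sign is visible
    predicted_distance_m: float       # network's distance estimate in meters


def evaluate(samples: List[Sample], max_range_m: float = 20.0) -> Dict[str, float]:
    """Compute detection rate, mean absolute distance error, and false positive rate."""
    nan = float("nan")
    # Images that contain a stop sign within the range of interest.
    in_range = [s for s in samples
                if s.true_distance_m is not None and s.true_distance_m <= max_range_m]
    # Images with no stop sign at all.
    negatives = [s for s in samples if s.true_distance_m is None]
    detected = [s for s in in_range if s.predicted_present]

    detection_rate = len(detected) / len(in_range) if in_range else nan
    # Assumption: distance error is averaged over detected signs only.
    mean_abs_error_m = (
        sum(abs(s.predicted_distance_m - s.true_distance_m) for s in detected) / len(detected)
        if detected else nan
    )
    # Assumption: false positive rate is the fraction of no-sign images flagged as having a sign.
    false_positive_rate = (
        sum(1 for s in negatives if s.predicted_present) / len(negatives)
        if negatives else nan
    )
    return {
        "detection_rate": detection_rate,
        "mean_abs_error_m": mean_abs_error_m,
        "false_positive_rate": false_positive_rate,
    }
```

Signs farther away than `max_range_m` are ignored by the detection rate, which mirrors the "within 20 meters" qualifier in the results above.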

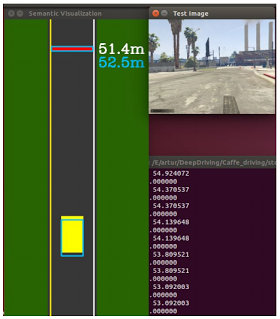
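A network that predicts both the presence of a stop sign and the distance to it is typically trained with a combined objective. The sketch below shows one common form of such a multi-task loss for a single image: binary cross-entropy on the presence prediction plus an absolute distance error that only counts when a sign is actually present. The function name, its parameters, and the `distance_weight` value are assumptions for illustration and are not taken from the paper or thesis, which may use a different objective.

```python
import math


def joint_loss(p_present: float,
               pred_distance_m: float,
               has_sign: bool,
               true_distance_m: float,
               distance_weight: float = 0.1) -> float:
    """Illustrative per-image loss: presence classification plus masked distance regression."""
    eps = 1e-7
    # Clamp the predicted probability so the logarithm stays finite.
    p = min(max(p_present, eps), 1.0 - eps)
    # Binary cross-entropy on whether a stop sign is present.
    detection_loss = -math.log(p) if has_sign else -math.log(1.0 - p)
    # The distance term is masked out when there is no sign to measure to.
    distance_loss = abs(pred_distance_m - true_distance_m) if has_sign else 0.0
    return detection_loss + distance_weight * distance_loss
```

Masking the distance term keeps images without a stop sign from pushing the distance output toward an arbitrary placeholder value.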

Application 2: Testing Deep Driving [2] CNN

A Deep Driving convolutional neural network driving a car in GTA 5. These videos show very early tests of using GTA 5 for testing the performance of driving agents in real time. The blue dot represents the point where the AI would like to go based on its estimates of the heading of the road. Please see Appendix A of Strategies for Training of Deep Neural for Driving.pdf.

aTaxis and the Future of Mobility

Looking beyond individual vehicles, the following report examines ways of managing fleets of autonomous vehicles (aTaxis) in the state of New Jersey with an extension to New York City. http://orfe.princeton.edu/~alaink/NJ_aTaxiOrf467F15/Orf467F15FinalReport/ORF467F15FinalReport_aTaxisForNJ-A1stLookAtWhatItWouldTake.pdf

Visual Congestion Detection

In a related line of research, we examine a new approach to the problem of real-time traffic congestion detection based on single image analysis. We demonstrate the use of a convolutional neural network in this domain. With this learning model and the direct perception approach, which transforms an image directly into a congestion indicator, we design a system that can detect congestion independently of location, time, and weather. We further demonstrate that the use of the Fast Fourier Transform and wavelet transform can improve the accuracy of a convolutional neural network across multiple conditions in new locations. Direct Perception for Congestion Scene Detection.pdf

Code

https://github.com/arturf1/GTA5-Scripts

Papers

Related Literature

http://deepdriving.cs.princeton.edu/

Project focusing on reinforcement learning in GTA 5

http://deepdrive.io/

A method for computing lane marking locations in GTA 5

https://github.com/singularsparkle/DeepGTAV/blob/master/GTAVLaneMarkingDetection.pdf

http://taskandpurpose.com/video-games-actually-make-better-soldier/

GTA 5 used for image segmentation:

http://download.visinf.tu-darmstadt.de/data/from_games/index.html

https://www.youtube.com/watch?v=JGAIfWG2MQQ

Here is an informative newsletter for general news about autonomous vehicles.

http://smartdrivingcar.com/newsletter.html